In this article, I’ll show you how to set up logging for an ASP.NET Core application using ElasticSearch, Kibana and Serilog.

Before diving deep into implementation, let’s understand the basics of ElasticSearch, Kibana and Serilog.

You can find the source code here

What is ElasticSearch?

Elasticsearch is a distributed, open source search and analytics engine for all types of data, including textual, numerical, geospatial, structured, and unstructured. Elasticsearch is built on Apache Lucene and was first released in 2010 by Elasticsearch N.V. (now known as Elastic).

Sourced from https://www.elastic.co/

What is Kibana?

Kibana is a UI application that sits on top of ElasticSearch. It provides search and visualisation capabilities for the data indexed in ElasticSearch.

What is Serilog?

I have already written an in-depth article on Serilog; I highly encourage you to read it by clicking here

Why logging with ElasticSearch and Kibana?

Traditionally, we often log to flat files. This approach comes with a few drawbacks:

- Accessing the log file on the server is a tedious job.

- Searching for errors in the log file is quite cumbersome and time consuming.

These drawbacks can be rectified using ElasticSearch. It makes logs easily accessible and searchable using a simple query language, coupled with the Kibana interface.

Prerequisites

To follow along, make sure you have the following installed:

- Visual Studio / Visual Studio Code

- Docker Desktop

- .NET Core SDK 3.1

Project Creation and NuGet packages

Let’s begin by creating an ASP.NET Core Web API application and naming the project “ElasticKibanaLoggingVerify”.

After creating the project, make sure the following NuGet packages are installed

dotnet add package Serilog.AspNetCore

dotnet add package Serilog.Enrichers.Environment

dotnet add package Serilog.Sinks.Elasticsearch

Docker Compose of ElasticSearch and Kibana

Before jumping into the implementation, let’s spin up the Docker containers for ElasticSearch and Kibana.

ElasticSearch can run on Docker as a single node or as a multi-node cluster. A single node is recommended for development and testing, whereas a multi-node cluster is recommended for pre-production and production environments.

Create a new folder named docker and, inside it, a new file named docker-compose.yml.

version: '3.4'

services:
  elasticsearch:
    container_name: elasticsearch
    image: docker.elastic.co/elasticsearch/elasticsearch:7.9.1
    ports:
      - 9200:9200
    volumes:
      - elasticsearch-data:/usr/share/elasticsearch/data
    environment:
      - xpack.monitoring.enabled=true
      - xpack.watcher.enabled=false
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
      - discovery.type=single-node
    networks:
      - elastic

  kibana:
    container_name: kibana
    image: docker.elastic.co/kibana/kibana:7.9.1
    ports:
      - 5601:5601
    depends_on:
      - elasticsearch
    environment:
      # Kibana 7.x reads ELASTICSEARCH_HOSTS (ELASTICSEARCH_URL was the 6.x name).
      # Inside the Compose network, ElasticSearch is reached by its service name, not localhost.
      - ELASTICSEARCH_HOSTS=http://elasticsearch:9200
    networks:
      - elastic

networks:
  elastic:
    driver: bridge

volumes:
  elasticsearch-data:

Start both containers by running docker-compose up -d from the docker folder.

Note: At the time of writing, the latest version of ElasticSearch and Kibana is v7.9.1. I highly recommend checking this link to make sure you are working with the latest version.

There is also an easy way to run both ElasticSearch and Kibana using a single container; see the command here.

Note: I haven’t tried the above container, and running both ElasticSearch and Kibana in a single container is not recommended in a production environment.

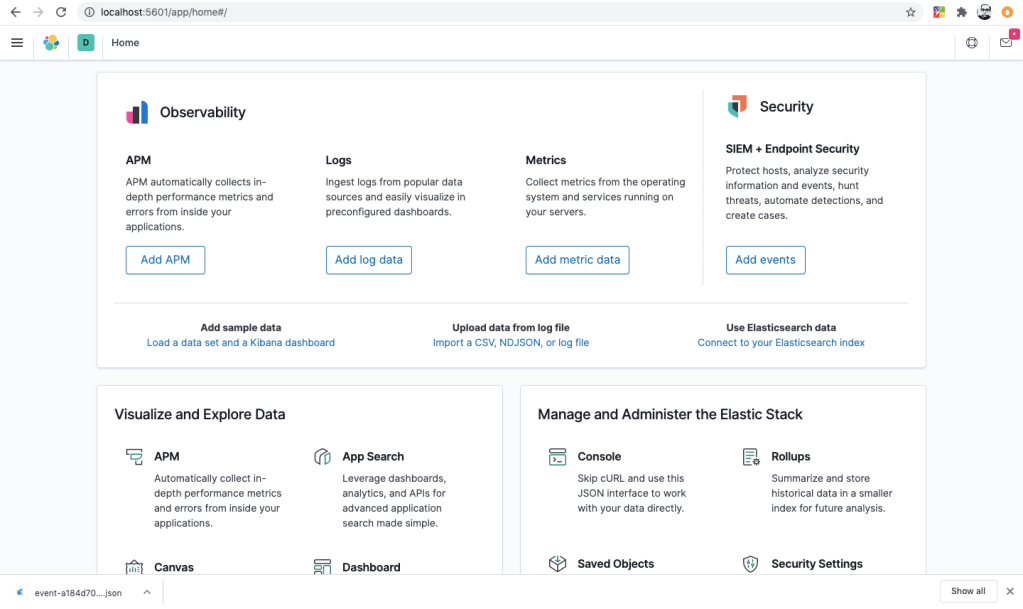

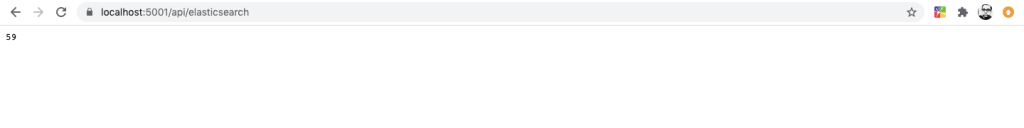

Verify that ElasticSearch and Kibana are up and running

Navigate to http://localhost:9200 to verify ElasticSearch

Navigate to http://localhost:5601 to verify Kibana
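
The same check can also be scripted. The following is a minimal sketch, assuming the two URLs above and a hypothetical helper class named StackHealthCheck (it is not part of the original project). It relies only on HttpClient from the base class library and treats any 2xx answer as "up".

```csharp
using System;
using System.Net.Http;
using System.Threading.Tasks;

// Hypothetical helper for illustration; the name is an assumption.
public static class StackHealthCheck
{
    // Returns true when the URL answers with a 2xx status code.
    // Returns false when the connection fails, the request times out,
    // or the server answers with a non-success status code.
    public static async Task<bool> IsUpAsync(HttpClient client, string url)
    {
        try
        {
            using (var response = await client.GetAsync(url))
            {
                return response.IsSuccessStatusCode;
            }
        }
        catch (HttpRequestException)
        {
            // Connection refused, DNS failure and similar transport errors.
            return false;
        }
        catch (TaskCanceledException)
        {
            // HttpClient signals a timeout by cancelling the request.
            return false;
        }
    }
}
```

From any async method, await StackHealthCheck.IsUpAsync(client, "http://localhost:9200") and await StackHealthCheck.IsUpAsync(client, "http://localhost:5601") should both return true once the containers have finished starting. Kibana usually takes noticeably longer than ElasticSearch to become ready, so a false result shortly after startup is not necessarily an error.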

Removing the out-of-the-box configuration for logging

As discussed in the previous article on Serilog, the out-of-the-box logging configuration in appsettings.json is not necessary. Only the configuration below needs to be kept from the default appsettings.json

{
  "AllowedHosts": "*"
}

Now add the ElasticSearch URL to appsettings.json, so that the complete file looks like this

{
  "ElasticConfiguration": {
    "Uri": "http://localhost:9200"
  },
  "AllowedHosts": "*"
}

Configure logging

The next step is to configure logging in Program.cs

using System;
using System.Reflection;
using Microsoft.AspNetCore.Hosting;
using Microsoft.Extensions.Configuration;
using Microsoft.Extensions.Hosting;
using Serilog;
using Serilog.Sinks.Elasticsearch;

namespace ElasticKibanaLoggingVerify
{
    public class Program
    {
        public static void Main(string[] args)
        {
            var environment = Environment.GetEnvironmentVariable("ASPNETCORE_ENVIRONMENT");

            // Load the configuration up front so the sink URI is available before the host is built.
            var configuration = new ConfigurationBuilder()
                .AddJsonFile("appsettings.json", optional: false, reloadOnChange: true)
                .AddJsonFile($"appsettings.{environment}.json", optional: true)
                .Build();

            Log.Logger = new LoggerConfiguration()
                .Enrich.FromLogContext()
                .WriteTo.Elasticsearch(new ElasticsearchSinkOptions(new Uri(configuration["ElasticConfiguration:Uri"]))
                {
                    AutoRegisterTemplate = true,
                    // Index name: lower-cased assembly name followed by year and month.
                    IndexFormat = $"{Assembly.GetExecutingAssembly().GetName().Name.ToLower()}-{DateTime.UtcNow:yyyy-MM}"
                })
                // Attach the hosting environment name to every log event.
                .Enrich.WithProperty("Environment", environment)
                .ReadFrom.Configuration(configuration)
                .CreateLogger();

            CreateHostBuilder(args).Build().Run();
        }

        public static IHostBuilder CreateHostBuilder(string[] args) =>
            Host.CreateDefaultBuilder(args)
                .ConfigureWebHostDefaults(webBuilder =>
                {
                    webBuilder.UseStartup<Startup>();
                })
                .ConfigureAppConfiguration(configuration =>
                {
                    configuration.AddJsonFile("appsettings.json", optional: false, reloadOnChange: true);
                    configuration.AddJsonFile(
                        $"appsettings.{Environment.GetEnvironmentVariable("ASPNETCORE_ENVIRONMENT")}.json",
                        optional: true);
                })
                // Route framework and application logs through Serilog.
                .UseSerilog();
    }
}
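
The sink registration above passes configuration["ElasticConfiguration:Uri"] straight to new Uri(...), which throws an ArgumentNullException when the setting is missing. As a minimal sketch, assuming you prefer a clearer startup error, the value could be validated first. ElasticSettings and ParseUri are hypothetical names introduced here for illustration; they are not part of the original project or of Serilog.

```csharp
using System;

// Hypothetical helper for illustration; the names are assumptions.
public static class ElasticSettings
{
    // Converts the raw setting into an absolute http(s) Uri,
    // or fails fast with a message that names the offending setting.
    public static Uri ParseUri(string value)
    {
        if (string.IsNullOrWhiteSpace(value))
        {
            throw new InvalidOperationException(
                "ElasticConfiguration:Uri is missing from appsettings.json.");
        }

        // Reject relative values and values without an http or https scheme.
        if (!Uri.TryCreate(value, UriKind.Absolute, out var uri) ||
            (uri.Scheme != Uri.UriSchemeHttp && uri.Scheme != Uri.UriSchemeHttps))
        {
            throw new InvalidOperationException(
                $"ElasticConfiguration:Uri is not a valid http(s) URL: '{value}'.");
        }

        return uri;
    }
}
```

With such a helper in place, the sink options could be created with new ElasticsearchSinkOptions(ElasticSettings.ParseUri(configuration["ElasticConfiguration:Uri"])), so a misconfigured environment fails at startup with a readable message.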

In the previous article on Serilog, we saw how important enrichment and the sink options are.

You can register the ElasticSearch sink in code as follows

.WriteTo.Elasticsearch(new ElasticsearchSinkOptions(new Uri(configuration["ElasticConfiguration:Uri"]))
{
    AutoRegisterTemplate = true,
    IndexFormat = $"{Assembly.GetExecutingAssembly().GetName().Name.ToLower()}-{DateTime.UtcNow:yyyy-MM}"
})
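
To see what index name this expression produces, here is a minimal sketch that isolates the same logic in a hypothetical helper (IndexNames.Monthly is an illustrative name, not part of the project). It lower-cases the assembly name, because ElasticSearch index names must be lowercase, and appends the year and month.

```csharp
using System;
using System.Globalization;

// Hypothetical helper for illustration; the names are assumptions.
public static class IndexNames
{
    // Builds "<assembly name in lower case>-<yyyy-MM>" from the given inputs.
    public static string Monthly(string assemblyName, DateTime utcNow)
    {
        // Invariant culture keeps the result independent of the server's locale.
        var prefix = assemblyName.ToLowerInvariant();
        var month = utcNow.ToString("yyyy-MM", CultureInfo.InvariantCulture);
        return prefix + "-" + month;
    }
}
```

For the project in this article, IndexNames.Monthly("ElasticKibanaLoggingVerify", new DateTime(2020, 9, 15)) returns elastickibanaloggingverify-2020-09. Note that the interpolated string in the sink options is evaluated once, when the application starts, so a process that keeps running into the next month continues to write to the index computed at startup. The sink’s default IndexFormat, logstash-{0:yyyy.MM.dd}, uses a {0:...} placeholder instead; as far as I know this is filled in from each event’s timestamp, which may be worth considering for long-running services.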

Create a Controller to validate the behavior

You can create a controller to verify the logging details in Kibana

using System;
using Microsoft.AspNetCore.Mvc;
using Microsoft.Extensions.Logging;

[Route("api/[controller]")]
public class ElasticSearchController : Controller
{
    private readonly ILogger<ElasticSearchController> _logger;

    public ElasticSearchController(ILogger<ElasticSearchController> logger)
    {
        _logger = logger;
    }

    // GET: api/ElasticSearch
    [HttpGet]
    public int GetRandomvalue()
    {
        var random = new Random();

        // Next(0, 100) returns a value from 0 to 99; the upper bound is exclusive.
        var randomValue = random.Next(0, 100);
        _logger.LogInformation($"Random Value is {randomValue}");

        return randomValue;
    }
}

The above controller is self-explanatory: it generates a random value between 0 and 99 (the upper bound of random.Next(0, 100) is exclusive). Thereafter, I’m logging the random value using

_logger.LogInformation($"Random Value is {randomValue}");
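
The call above uses string interpolation, so the value ends up only inside the message text. Serilog also supports message templates, where the value is captured as a named property in addition to being rendered. The following is a minimal sketch of the difference, assuming the Serilog packages installed earlier; CollectingSink and StructuredLoggingDemo are hypothetical names used only to inspect the captured events in memory.

```csharp
using System.Collections.Generic;
using Serilog;
using Serilog.Core;
using Serilog.Events;

// Hypothetical in-memory sink, used here only to look at what Serilog captures.
public class CollectingSink : ILogEventSink
{
    public List<LogEvent> Events { get; } = new List<LogEvent>();

    public void Emit(LogEvent logEvent) => Events.Add(logEvent);
}

public static class StructuredLoggingDemo
{
    public static CollectingSink Run(int randomValue)
    {
        var sink = new CollectingSink();

        using (var logger = new LoggerConfiguration().WriteTo.Sink(sink).CreateLogger())
        {
            // Interpolated string: the value is baked into the text, no property is captured.
            logger.Information($"Random Value is {randomValue}");

            // Message template: the value is also captured as the RandomValue property.
            logger.Information("Random Value is {RandomValue}", randomValue);
        }

        return sink;
    }
}
```

Both events render the same text, but only the second one carries a RandomValue property. The same template syntax works with the ILogger used in the controller, for example _logger.LogInformation("Random Value is {RandomValue}", randomValue). With the ElasticSearch sink, such properties typically show up as separate fields in Kibana (under a fields prefix in the default document format, as far as I know), which allows filtering on the value itself rather than on the message text.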

Start logging events to ElasticSearch and configure Kibana

Now, run the Web API application by pressing F5 and navigate to https://localhost:5001/api/ElasticSearch

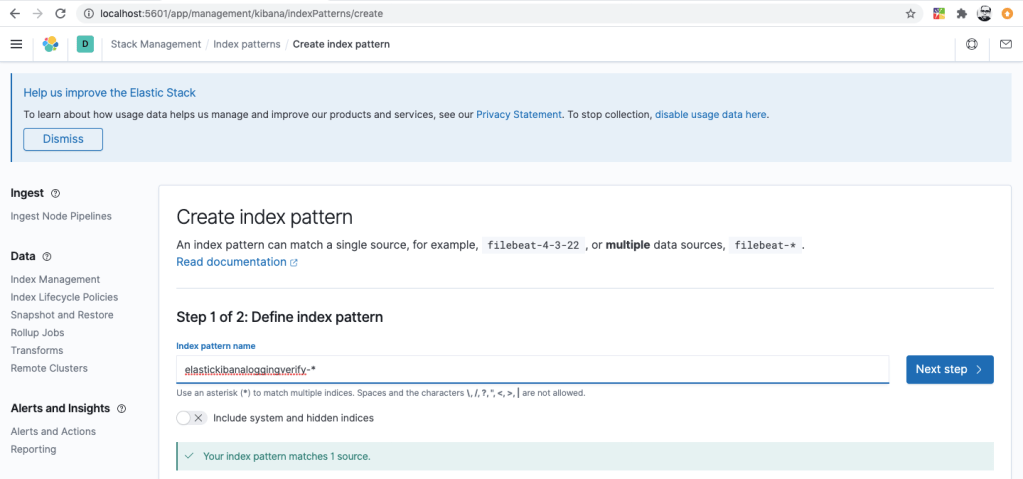

Now, let’s configure an index pattern in Kibana

After creating the index pattern, you can filter the messages by using

message: "59"

Logging error to ElasticSearch

Let’s add a new HTTP GET method to the ElasticSearchController

[HttpGet("{id}")]
public string ThrowErrorMessage(int id)
{
    try
    {
        if (id <= 0)
            throw new Exception($"id cannot be less than or equal to 0. value passed is {id}");

        return id.ToString();
    }
    catch (Exception ex)
    {
        // Log the exception together with its message at Error level.
        _logger.LogError(ex, ex.Message);
    }

    return string.Empty;
}

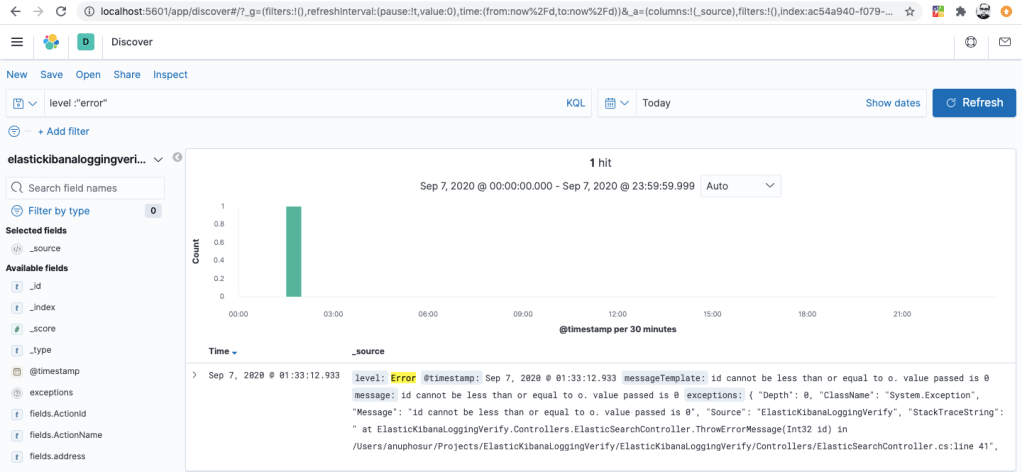

The above code is quite straightforward and self-explanatory. Let’s test the ThrowErrorMessage method by passing an id equal to 0.

You can narrow the results down to error logs using

level:"error"

You can filter further by combining multiple conditions

message: "value passed" and level:"error"

Conclusion

Earlier, setting up logging was quite a tedious job, and getting the flat files from the servers and searching them for errors was another pain.

With Docker in place, setting up the environment is effortless. Especially with a microservice architecture, it’s much easier to create the ElasticSearch indexes, and the logged data can be visualised using Kibana.

Logging can be even more powerful when you perform the same setup on Kubernetes/AKS with multiple nodes.

There’s really no excuse for developers and architects not to incorporate logging using ElasticSearch, Kibana and Docker.

Cheers!

I hope you liked the article. If you found it interesting, kindly like and share it.
